Publication News

I am thrilled to announce that our paper, F3DGS: Federated 3D Gaussian Splatting for Decentralized Multi-Agent World Modeling, has been officially accepted to the CVPR 2026 SPAR3D Workshop!

If you have been following the world of computer vision and robotics, you know that 3D Gaussian Splatting (3DGS) has completely changed the game for rendering photorealistic 3D scenes at lightning-fast speeds. But as we push to deploy fleets of autonomous robots in the real world, a massive roadblock has emerged. I am excited to share how our new framework, F3DGS, breaks right through it by tackling decentralized multi-agent 3D reconstruction.

The Problem: The Centralized Bottleneck

Right now, if you want to build a beautiful 3DGS map, the system is greedy. It demands centralized access to every single piece of training data. If you have a team of robots exploring a building, they currently have to beam all their high-resolution images back to a single main server to stitch the world together.

In the real world, this centralized approach instantly hits three brick walls:

- Communication Overhead: Sending thousands of high-res images over a local network quickly maxes out bandwidth and storage limits.

- Privacy Risks: In many scenarios, sharing raw sensor data is not just technically difficult; strict data-sharing policies can restrict it outright.

- Scalability Limitations: Processing everything in one place creates a computational traffic jam that scales poorly as you add more robots to the fleet.

The Solution: Federated 3D Gaussian Splatting (F3DGS)

To solve this, we brought the concept of Federated Learning into the 3DGS universe.

The core philosophy of F3DGS is simple: keep the data local, and share only the updates. Instead of sending heavy raw images, our robots process their own observations locally and only send lightweight model updates back to the server.

However, doing this with 3D map coordinates usually causes "geometric drift": the map gets scrambled as different robots optimize positions independently. Here is our secret sauce for fixing that:

- Building a Frozen Scaffold: Before the robots start painting the details, we use LiDAR point clouds to build a globally shared, fixed geometric skeleton of the world.

- Decoupling Appearance from Geometry: During the learning process, the physical 3D positions of the map are locked. The robots are only allowed to update the appearance attributes (things like color, opacity, and scale) using their local data. Because no robot ever moves a point, the map stays aligned and geometric drift cannot creep in.

- Visibility-Aware Merging: When the central server combines everyone's updates, it doesn't average them blindly. It uses a smart weighting system that gives more influence to the robot that had the clearest, most frequent view of a specific area.
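
To make the merging step concrete, here is a minimal sketch of a visibility-weighted average in plain Python. It is an illustration under stated assumptions, not the paper's implementation: the `ClientUpdate` message format and the `merge_updates` function are hypothetical names, the weight is assumed to be the number of local frames in which a robot saw a given Gaussian, and scale is left out for brevity (it would be merged the same way as color and opacity). Positions never appear in the update, which mirrors the frozen scaffold.

```python
from dataclasses import dataclass


@dataclass
class ClientUpdate:
    """Appearance-only update from one robot (hypothetical message format)."""
    colors: list[list[float]]  # per-Gaussian RGB, one row per scaffold point
    opacities: list[float]     # per-Gaussian opacity
    visibility: list[int]      # assumed weight: local frames that saw each Gaussian


def merge_updates(global_colors, global_opacities, updates):
    """Visibility-weighted average of appearance attributes.

    Positions are not an input at all: the scaffold is frozen, so only
    appearance is merged. Gaussians that no robot observed keep their
    previous global values.
    """
    new_colors, new_opacities = [], []
    for i in range(len(global_opacities)):
        total = sum(u.visibility[i] for u in updates)
        if total == 0:
            # Nobody saw this Gaussian in this round: leave it unchanged.
            new_colors.append(list(global_colors[i]))
            new_opacities.append(global_opacities[i])
            continue
        new_colors.append([
            sum(u.visibility[i] * u.colors[i][c] for u in updates) / total
            for c in range(3)
        ])
        new_opacities.append(
            sum(u.visibility[i] * u.opacities[i] for u in updates) / total
        )
    return new_colors, new_opacities


# Two robots, two Gaussians. Robot A saw Gaussian 0 in three frames,
# robot B in one frame; neither saw Gaussian 1.
robot_a = ClientUpdate(colors=[[1.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
                       opacities=[0.8, 0.0], visibility=[3, 0])
robot_b = ClientUpdate(colors=[[0.0, 0.0, 1.0], [0.0, 0.0, 0.0]],
                       opacities=[0.4, 0.0], visibility=[1, 0])
colors, opacities = merge_updates(
    [[0.5, 0.5, 0.5], [0.2, 0.2, 0.2]], [0.5, 0.9], [robot_a, robot_b]
)
```

In this toy run, Gaussian 0 ends up three-quarters robot A's color and one-quarter robot B's, while Gaussian 1 keeps its previous global values because no robot observed it.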

The Results

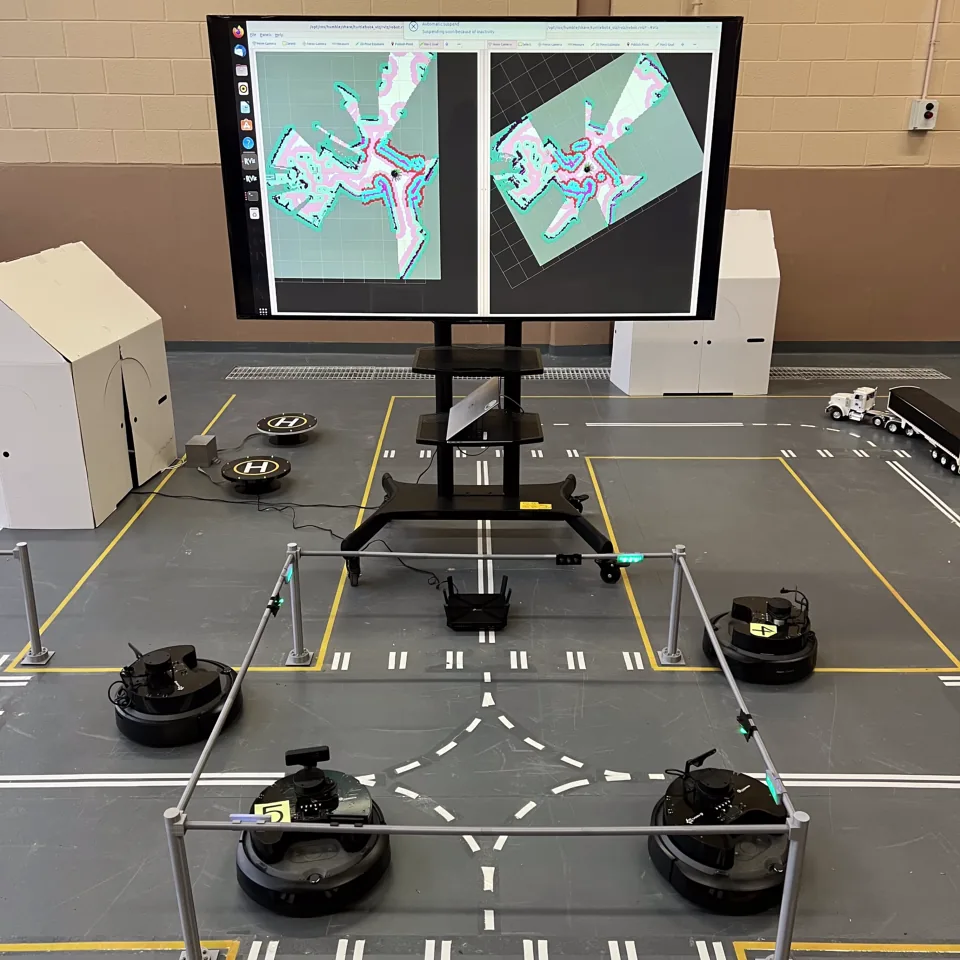

We tested F3DGS using a mobile robot equipped with synchronized LiDAR, RGB, and IMU measurements across multiple indoor sequences. The results were encouraging: F3DGS achieved 3D reconstruction quality comparable to traditional, centralized training. This shows that you can successfully map a decentralized world without ever forcing agents to share their raw data.

What's Next?

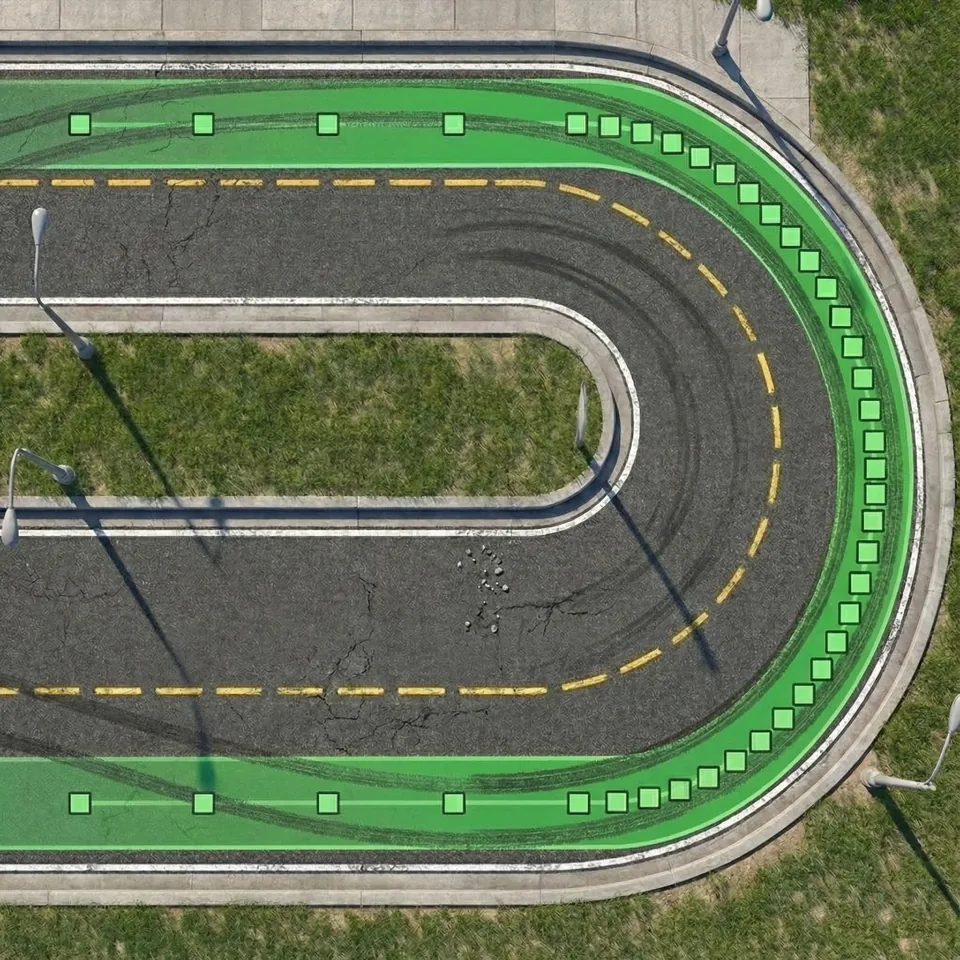

To help push this field forward, we are publicly releasing our source code, development kit, and the new multi-sequence "MeanGreen" dataset.

Stay tuned for the official repository links!